The ASR33, like most teletypes of the era, works at a fixed rate of 10 characters per second. It is 110 Baud, using 1 start bit, 8 data bits (including parity), and 2 stop bits, so 10cps Tx and 10cps Rx; 10cps printing; 10cps punching tape; 10cps reading tape; 10cps maximum typing speed. Everything is driven by one motor running at this 10cps rate, engaging clutches to start an operation which completes in one turn. So everything has to happen within 100ms, well, sort of.
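The 10cps figure falls straight out of the frame size: each character is 1 + 8 + 2 = 11 bits on the wire, so at 110 Baud the arithmetic is simply:

$$
\frac{110\ \text{bit/s}}{(1 + 8 + 2)\ \text{bit/char}} = 10\ \text{char/s},
\qquad
\frac{1}{10\ \text{char/s}} = 100\ \text{ms per character}
$$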

There is one exception: carriage return [CR]. The carriage is released within the 100ms, but it is returned by a spring, and if it is far over to the right it does not get back to the left within 100ms. The usual fix is to follow the CR with another non-printing character, typically the line feed [LF], as CR and LF are used together for each new line. The paper advances whilst the carriage is returning, so the carriage gets a whole 200ms to complete the return. That is enough time to get to the left, but can leave the carriage still bouncing, so the first printable character is not well aligned. In practice 200ms is usually fine, unless you are particularly fussy. The fix for this is to send another non-printing character, such as a NULL, another CR, or even a rub out [RO]. The standard HEREIS drum coding even specifies CR, LF and RO at the start.

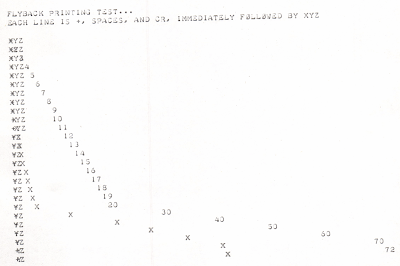

If you do send characters immediately following a CR, you get them printed in the fly-back of the carriage, like this...

The whole “new line = CR+LF” thing has plagued computing for the last 6 decades, with some systems and file formats using CR as new line, some LF, and some CR+LF. Even today I still sometimes find a file in DOS format using CR+LF that needs converting.
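Converting such a file is simple enough. Here is a minimal C sketch (the name `crlf_to_lf` is just illustrative, not from any particular tool) that turns CR+LF into LF in place, leaving any lone CR alone since it might be a deliberate overprint:

```c
#include <stddef.h>

/* Convert DOS-style CR+LF line endings to Unix-style LF, in place.
 * A CR not immediately followed by LF is kept unchanged, as it may be
 * a deliberate overprint. Returns the new length of the buffer. */
size_t crlf_to_lf(char *buf, size_t len)
{
    size_t out = 0;
    for (size_t in = 0; in < len; in++) {
        if (buf[in] == '\r' && in + 1 < len && buf[in + 1] == '\n')
            continue;               /* drop the CR; the LF is copied next time round */
        buf[out++] = buf[in];
    }
    return out;
}
```

It works in a single pass, because the output can never be longer than the input.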

The software I have written in the controller allows for this when printing text itself, sending CR, LF for a new line, and adding a NULL if the carriage is beyond column 40 at the time. This works, and is used for prompts and even the built-in Colossal Cave game.
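As a rough illustration of that rule, here is a minimal C sketch. The name `asr33_newline`, counting `column` as the number of characters already printed on the line, and reading "beyond 40 columns" as `column > 40` are all assumptions for the example, not the actual controller code:

```c
#include <stddef.h>

#define ASCII_NUL 0x00  /* ASCII NUL, called NULL in the text */
#define ASCII_LF  0x0A
#define ASCII_CR  0x0D

/* Hypothetical helper: write the new line sequence for an ASR33 into out[].
 * column = characters printed on the current line (assumed convention).
 * CR then LF always; the LF gives the carriage a second character time to
 * return. Beyond column 40 a NULL adds a third character time.
 * Returns the number of bytes written (2 or 3). */
size_t asr33_newline(unsigned column, unsigned char out[3])
{
    size_t n = 0;
    out[n++] = ASCII_CR;
    out[n++] = ASCII_LF;
    if (column > 40)
        out[n++] = ASCII_NUL;
    return n;
}
```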

However, this presents a few issues :-

- This works when generating text, but in some sort of raw mode it means the sending device needs to know to do this. Some systems know, some do not.

- If this is done automatically, so that extra characters are inserted, that is not a problem for printing, but when punching paper tape those extra characters get recorded too. That said, a NULL or RO on paper tape should not normally be an issue when read in. But it is not ideal, and assumes the tape does not hold binary data for some reason. So you sort of need a raw or processed mode for handling such tape, which is messy.

- Paper tape is sometimes used for raw / binary data, but can also carry “large text” or patterns (which print as gibberish). Whilst fun, this also has a practical use: labelling the start of a reel of paper tape in a human-readable format. If extra characters are added automatically for CR handling, they mess this up.

A new solution to an old problem...

My solution works because the controller now uses a soft UART, working the individual data bits on a timer interrupt. I did this to allow the extra low Baud rates used by some teletypes, which are not supported by hardware UARTs; some work as low as 45.45 Baud.
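To give an idea of what working the individual data bits on a timer interrupt means, here is a minimal C sketch of the transmit side only, assuming the ASR33's 110 Baud frame of 1 start, 8 data and 2 stop bits. The names `soft_tx_t`, `soft_tx_load`, `soft_tx_tick` and `bit_period_us` are hypothetical, and the hardware timer and output pin are left out. Lower Baud teletypes using 5-bit codes have a different frame, so `FRAME_BITS` and the frame layout would change for those:

```c
#include <stdint.h>

#define FRAME_BITS 11  /* 1 start + 8 data (incl. parity) + 2 stop */

/* Bit period in microseconds for a given Baud rate, rounded to nearest. */
uint32_t bit_period_us(double baud)
{
    return (uint32_t)(1000000.0 / baud + 0.5);
}

typedef struct {
    uint16_t frame;      /* bits still to send, next one in bit 0 */
    uint8_t  bits_left;  /* 0 when the line is idle */
} soft_tx_t;

/* Build the frame: bit 0 = start (space, 0), bits 1-8 = data LSB first,
 * bits 9-10 = stop (mark, 1). */
void soft_tx_load(soft_tx_t *tx, uint8_t byte)
{
    tx->frame = (uint16_t)((0x3u << 9) | ((uint16_t)byte << 1));
    tx->bits_left = FRAME_BITS;
}

/* Call once per bit period from the timer interrupt.
 * Returns the level to drive on the line: 1 = mark, 0 = space. */
int soft_tx_tick(soft_tx_t *tx)
{
    if (tx->bits_left == 0)
        return 1;                 /* idle line rests at mark */
    int level = tx->frame & 1u;
    tx->frame >>= 1;
    tx->bits_left--;
    return level;
}
```

At 110 Baud the bit period rounds to 9091µs, and 11 of those make the 100ms character time. Because everything is driven from the timer, the timer can just as easily count out a delay that is shorter than a bit.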

The soft UART has now been coded so that the bytes as actually sent to the teletype (i.e. in real time, not when buffered or queued) are tracked to know the carriage position, and it runs a timer between sending a CR and the next printable character. Non-printing characters such as LF, NULL or RO are allowed within this timeout, but the timeout has to finish before the first printable character after a CR is sent. This allows the CR to complete and avoids printing a character during the fly-back of the carriage.
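The hold-off logic can be sketched in a few lines of C. This is a minimal sketch, assuming a free-running 32-bit microsecond clock `now_us` and that the hold-off length is worked out elsewhere; `carriage_t`, `carriage_may_send` and `carriage_sent` are hypothetical names, not the controller's actual code. It also assumes a space counts as printable, since it moves the carriage:

```c
#include <stdbool.h>
#include <stdint.h>

#define ASCII_CR 0x0D

typedef struct {
    unsigned column;    /* characters printed since the last CR */
    uint32_t ready_us;  /* time the carriage is expected back at the left */
} carriage_t;

/* Printable = space (0x20) to '~' (0x7E). Control codes and RO (0x7F)
 * do not move the carriage, so they are not. */
static bool is_printable(uint8_t c)
{
    c &= 0x7F;                       /* ignore the parity bit */
    return c >= 0x20 && c < 0x7F;
}

/* May this byte be started now? LF, NULL, RO etc. may go during the
 * fly-back; printable bytes must wait until the hold-off has expired. */
bool carriage_may_send(const carriage_t *car, uint8_t c, uint32_t now_us)
{
    if (!is_printable(c))
        return true;
    return (int32_t)(now_us - car->ready_us) >= 0;  /* wrap-safe now >= ready */
}

/* Call as each byte actually leaves the soft UART (not when queued).
 * holdoff_us is the extra time a CR needs, e.g. based on car->column. */
void carriage_sent(carriage_t *car, uint8_t c, uint32_t now_us, uint32_t holdoff_us)
{
    c &= 0x7F;
    if (c == ASCII_CR) {
        car->ready_us = now_us + holdoff_us;  /* start the CR timer */
        car->column = 0;
    } else if (is_printable(c)) {
        car->column++;
    }
}
```

The important point is that `carriage_sent` is driven by what really goes out on the line, so the tracked position and the timer match what the teletype is actually doing, whatever is sitting in the buffer.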

This means :-

- Where the sending device already sends the extra characters to allow for the CR (even just CR, LF), operation is totally unchanged. This makes it 100% backwards compatible with the existing behaviour of teletypes, and means no special raw or processed modes are needed.

- Where the CR needs this extra time, it can be given in sub-character, even sub-bit, periods, rather than wasting the time of a whole extra character such as a NULL.

- The delay can be adjusted for the position of the carriage, and hence allow just the time it will take to complete the return.

- This works even when CR is immediately followed by a printable character (causing a pause you can just hear on longer lines).

- No extra characters are actually inserted, so characters as punched on paper tape are perfect, no gaps or ROs, etc.
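Scaling the delay to the carriage position can be sketched the same way. The timing model here is an assumption for illustration: a fixed base time plus a fixed time per column, with the next character's own 100ms frame counting towards the return. The constants are invented, not measured ASR33 figures, and `cr_holdoff_ticks` is a hypothetical name. Because the result is in timer ticks rather than characters, `tick_us` can be a fraction of a bit period:

```c
#include <stdint.h>

/* Illustrative constants only, NOT measured ASR33 figures. Chosen so a
 * return from column 72 (a full ASR33 line) takes about 200ms in total. */
#define RETURN_BASE_US        20000u
#define RETURN_US_PER_COLUMN   2500u
#define CHAR_TIME_US         100000u   /* 11 bits at 110 Baud */

/* Extra wait after a CR, before a printable character may start, in
 * ticks of tick_us. The next character's own frame time is assumed to
 * count towards the return, so short lines need no wait at all. */
uint32_t cr_holdoff_ticks(unsigned column, uint32_t tick_us)
{
    uint32_t needed = RETURN_BASE_US + RETURN_US_PER_COLUMN * (uint32_t)column;
    if (needed <= CHAR_TIME_US)
        return 0;
    uint32_t extra = needed - CHAR_TIME_US;
    return (extra + tick_us - 1) / tick_us;  /* round up to whole ticks */
}
```

For example, with a quarter-bit tick of about 2273µs at 110 Baud, a CR from column 72 would need 44 ticks of extra wait, whilst a CR from column 10 would need none.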

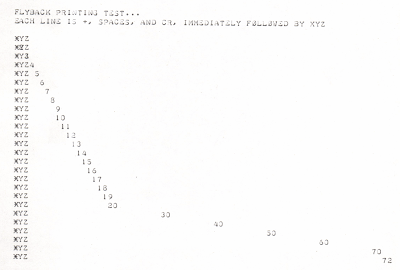

This works well, even with a printable character immediately following the CR, like this...

Today I learned...

What is funny (well, for me) is that there will be people who have worked in computing and IT for decades and encountered the whole craziness that is CR and LF, but have no idea why it was ever a thing. I hope this, in some way, explains some of the background.

You're welcome :-)

[thanks xkcd]

P.S. I cannot say for sure that the way teletypes work is why CR and LF are separate. Teletypes were around when ASCII was being developed; heck, my teletype does not even have lower case, as it uses the first (1963) version of ASCII. So the way they work is quite likely to be a factor. That said, CR and LF as characters date back even further than ASCII. It is true that CR being separate has been used for things like overprinting underscores, even overprinting hyphens many times to make a perforation, and other tricks, but I suspect those tricks are all a consequence of CR being separate and not the reason for it. Even the older manual typewriters had a carriage return lever that would typically do the line feed at the same time, so it seems odd they would be made separate characters if not for this reason. It is odd how a decision that may have seemed trivial at the time still impacts computing today. At the very least, you can see why it is CR then LF, and not LF then CR, in files.

Wow, thanks, I had no idea it was down to something like this. What still confuses me is how DOS chose CR+LF while UNIX decided that LF was sufficient. Given the age of UNIX, you'd think it would be the other way round!

You probably want to disentangle the new line sequence used in programming languages and file structures from the actual sequence sent to any particular device. In general, they're different and device drivers and libraries handle the mapping.

If I were to guess, DOS used the sequence that the simple peripherals likely to be connected to it at the time used, avoiding much device driver translation. Unix, being older, dealt with earlier peripherals that had a wide variety of ways of representing a new line and, probably consistent with its view of device independence, went for a single simple way to represent this at the programming level and in its byte-stream files.

Meanwhile, Internet line format -- quite likely to be fed directly to ASR33 teletypes -- went with CR/LF, no doubt for exactly that reason (I should ask around and find out, since I still know a few people who were around at the birth of the net).
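On Unix-like systems that device-level mapping is done by the terminal driver, via the POSIX termios `ONLCR` output flag (map NL to CR-NL on output). The core of it is just this; a minimal C sketch, with `lf_to_crlf` as a hypothetical name:

```c
#include <stddef.h>

/* Expand each LF to CR LF, as a terminal driver does on output when
 * feeding a Unix text stream to a teletype. out must have room for up
 * to 2 * len bytes. Returns the number of bytes written. */
size_t lf_to_crlf(const char *in, size_t len, char *out)
{
    size_t n = 0;
    for (size_t i = 0; i < len; i++) {
        if (in[i] == '\n')
            out[n++] = '\r';   /* CR first, so the slow return starts early */
        out[n++] = in[i];
    }
    return n;
}
```

Emitting CR before LF matters on a teletype: the slow carriage return starts first, and the line feed covers part of its time.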

Indeed, somehow we have managed to move from ASCII to UTF-8 in lots of places, but a simple web page is still meant to have CR+LF at the end of every header line. LOL.

I worked on terminal-driving firmware on a minicomputer which was old enough to have originally supported ASR33s. The firmware in the UART controller adds a NUL after every CR by default if it's driving an ASR33. There's an option to increase the number of NULs on specific devices (maybe they get sluggish if they get dirty?).

My introduction to computing in 1963 as a student was on an Elliott 803 computer, which used five-channel paper tape punched on a Creed teleprinter. It had both CR and LF as well as LS (letter shift) and FS (figure shift). I recall a machine code programming exercise to produce letters and numbers as readable characters on paper tape.

I think that in the 5-bit character systems the figure (number) shift (FS) and letter shift (LS) were used as timing delays. The barrel head would rotate 180 degrees back and forth without printing anything when it received a series of LS/FS characters.

Typewriters use a lever that first applies a "LF" and then is pushed to the start as a "CR".

You can do either individually too.

I guess the earliest devices were based on existing typewriter designs, so CR and LF separated

But why did it become a CR/LF rather than the LF/CR of typewriters?

On the teletype it is the CR that takes too long, so it needs to be followed by a non-printing character.

The trick doesn't work when simultaneously printing and punching tape. When the tape is later read (and printed), you need the delay on the tape.

In those days punched tape was used as storage...

/hjj

CR and LF as separate control characters originated in 5-bit Baudot ITA1. Apparently this system was primarily intended for paper-tape output, where CR and LF could be omitted entirely (the first version of Baudot didn't have them), but with an eye towards future keyboard-and-printer teletypes.

My first "printer" was a Murray code (modified Baudot) teletype. The baud rate was so low (45.5 bps) that I used a relay to drive it from my AIM-65.

The earliest reference to the CR LF order that I have found is 1954, ‘A Teletypewriter Manual: How to Operate the No. 15 Teletypewriter’ at https://archive.org/details/TWXOperateNo15Teletypewriter1943_1954

It says: “TO BEGIN A NEW LINE, press the CAR RET LINE FEED LTRS KEYS in this order. This returns the carriage to the beginning of the next line ready for typing letters in lower case. If the next line is to start in the upper case use the FIGS KEY instead of LTRS. The LTRS or FIGS KEY operation provides time for the carriage to return to the left margin on all connected teletypewriters before the next character is typed.”

(Note that ‘lower case’ here means capital letters and ‘upper case’ means digits and punctuation.)

Typewriters existed long before teletypes did.

Typewriters have had separate gears for CR (right-hand coil lever) and (variable) line feed (left toothed knob) almost forever.

Default engagement tied LF with CR so operating the lever would result in a CR+(variable)LF.

One could also disengage them to do CR with no LF and re-type on existing line.

Or LF with no CR and do staircase typing.

My Olivetti from the 80s still works like that.

All those mentioned tricks and many more (underlines, overstrikes, weird characters etc) existed long before teletypes existed.

So I don't think separating CR from LF was anything new: it could have been backwards compatibility, especially because teletype operators were usually typewriter operators too.

And while all those electro-mechanical constraints still hold true, I strongly think they were more an effect (of compatibility with typewriters) than a cause.

Found your comment. Typewriters used to allow half line feeds (for super or sub script maybe?) if I remember correctly. I have not found any reference to doing so for control chars.

I remember one in the late 70s for overlapping ASCII art, and I wrote a bad script to emulate it a few years back.

https://www.reddit.com/r/typewriters/comments/nliu53/typewriter_art_question_text_art_with_half_a/

If anyone knows anything about half line feeds, please let me know!